Kernel Modules

The SUSE SolidDriver Program focuses on packaging, delivering, deploying, and supporting kernel modules for installation on SUSE Linux Enterprise operating systems. Before looking at the details of how kernel modules are provided, it is important to have a basic understanding of what Linux kernel modules are and how they work. This section gives a quick overview.

If you’re familiar with Linux kernel modules, you can skip to the section [Third Party Kernel Modules].

What are Kernel Modules

Kernel modules are pieces of kernel object code that can be dynamically loaded into a running instance of a Linux kernel. Not only can modules be loaded when needed, they can also be unloaded and reloaded [1]. The ability to load sections of kernel code as needed allows for great flexibility when using Linux kernels. Because of their loadable nature, they are sometimes referred to as Loadable Kernel Modules (LKM).

The Linux kernel is an extensive program that supports a considerable number of features and functions, from file systems to a vast diversity of hardware components. The ability to load only those sections of kernel code that are required for a given installation or workload allows the kernel to run with a minimal memory footprint.

When new features or technologies are to be used, new kernel modules can be loaded as needed without rebooting the system. This is a key part of device hot-plugging support in the Linux kernel, where device drivers (kernel modules) are loaded as new devices are discovered.

Kernel modules can be built separately from the base kernel code, then loaded and tested dynamically on existing kernel installations. This avoids the expensive process of building and deploying a complete new kernel with each iteration.
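To see which modules are currently loaded on a running system, one option is to read `/proc/modules`, the file that tools such as `lsmod` present in tabular form. The following is a minimal sketch of a parser for that format. The `LoadedModule` record and the `parse_proc_modules` function are names made up for this illustration, and the sketch only reads the first four fields of each line (name, size, reference count, and users).

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class LoadedModule:
    name: str
    size: int            # memory used by the module, in bytes
    refcount: int        # number of current users (0 if not reported)
    used_by: list[str]   # names of modules that depend on this one


def parse_proc_modules(text: str) -> list[LoadedModule]:
    """Parse text in the /proc/modules format into LoadedModule records."""
    modules = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 4:
            continue  # skip blank or malformed lines
        name, size, refcount, users = fields[:4]
        # The users field is "-" when nothing uses the module; otherwise it
        # is a comma-separated list that ends with a trailing comma.
        used_by = [] if users == "-" else [u for u in users.split(",") if u]
        # The reference count may be "-" on kernels built without unload support.
        count = 0 if refcount == "-" else int(refcount)
        modules.append(LoadedModule(name, int(size), count, used_by))
    return modules


if __name__ == "__main__":
    proc = Path("/proc/modules")
    if proc.exists():
        for mod in parse_proc_modules(proc.read_text()):
            users = ", ".join(mod.used_by) or "-"
            print(f"{mod.name:<24} {mod.size:>10}  used by: {users}")
```

Running the script on a Linux system prints one line per loaded module. The list changes as modules are loaded and unloaded, which makes it a simple way to observe the dynamic behavior described above.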

Kernel Modules vs Kernel Drivers

The terms kernel module and kernel driver are essentially synonymous, and in this document both terms will be used interchangeably.

To be more precise, kernel module is the more general term: it covers any code that is dynamically loaded into a running kernel instance. Kernel drivers are kernel modules built with the task of driving, or otherwise providing interfaces to, entities external to the kernel itself, whether these are file systems, hardware devices, or software applications.

Power and Privilege of Kernel Modules

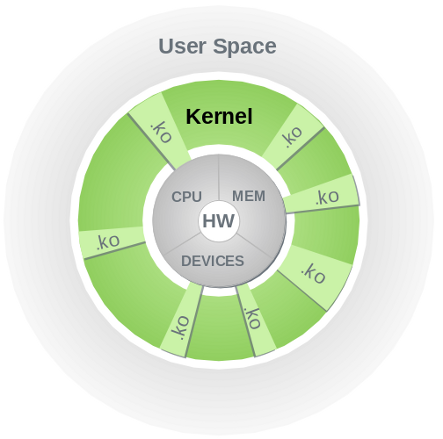

As explained in Anatomy of the Linux kernel [2], the GNU/Linux operating system consists of two major parts: the user space and the kernel. The kernel provides resource management functions to the user space, such as device access and process and memory management. Everything running in the kernel has full access to the hardware of the entire machine; there is no protection between the different parts. The lack of protection in the kernel makes the code fast, but at the same time dangerous: a bug in one part can bring down the entire system.

Kernel Modules

The illustration above shows the different layers of a running computer system with the hardware resources shown in the center, the user space on the outer ring with the kernel space in-between. The gray slices labeled .ko represent kernel modules. As can be seen in the diagram, there is no separation or layer of protection between kernel modules and the kernel or hardware resources.

The user space builds upon the abstractions provided by the kernel. Everything in the user space is running in the context of a process, and different processes are protected from each other. The main communication layer between the user space and the kernel is the system call interface.

In this document, we focus on the kernel space and on the abstractions and mechanisms provided to extend the kernel’s functionality by adding support for new hardware devices or software features.

Support for different kinds of hardware lives in drivers, which can be compiled directly into the main kernel image or into kernel modules, which can be loaded at run-time. Likewise, features like file systems or network protocols can be part of the main kernel image or they can be implemented in modules. When a module is loaded, it becomes a part of the kernel and gains all the powers that kernel code has.
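Whether a given driver or feature is compiled into the main kernel image or built as a loadable module is recorded in the kernel build configuration, where an option set to `y` is built in and an option set to `m` is built as a module. The sketch below classifies options from configuration text of that form. The function name `classify_config` is made up for this illustration, and the sample values used with it are illustrative rather than taken from any particular SUSE kernel.

```python
def classify_config(config_text: str) -> dict[str, str]:
    """Map each tristate option set to y or m to 'built-in' or 'module'."""
    result = {}
    for line in config_text.splitlines():
        line = line.strip()
        # Blank lines and comments (including "# CONFIG_X is not set")
        # describe options that are not enabled at all.
        if not line or line.startswith("#"):
            continue
        option, _, value = line.partition("=")
        if value == "y":
            result[option] = "built-in"
        elif value == "m":
            result[option] = "module"
        # Any other value (numbers, strings) is not a built-in/module choice.
    return result


if __name__ == "__main__":
    sample = """\
CONFIG_EXT4_FS=y
CONFIG_BTRFS_FS=m
# CONFIG_XFS_FS is not set
CONFIG_HZ=250
"""
    for option, kind in classify_config(sample).items():
        print(f"{option}: {kind}")
```

In this illustrative sample, the ext4 file system would be part of the main kernel image, while Btrfs support would be loaded at run time as a module.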

The Kernel Module Programming Interface

The programming interface between the kernel and kernel modules is similar to that of shared libraries [3] in user space: a shared library provides (“exports”) a set of functions and variables. A user-space program that uses those functions and variables loads the shared library into its address space. The library loading code makes sure that the library is properly initialized (“constructed”), and that all references to functions and variables implemented in the library are properly resolved so that the program can use them. Similarly, the kernel exports functions and variables to kernel modules. When a kernel module is loaded, the kernel resolves all references to functions and variables implemented in the kernel so that the module can access them, and then it initializes the module. (In this analogy, the kernel module is in the role of the user-space program.)
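The user-space half of this analogy can be observed directly. The following sketch uses Python's standard `ctypes` module to resolve the exported symbol `strlen` from the C library and call it. It assumes a typical Linux system where the C library is already mapped into the Python process; `has_symbol` and the deliberately nonexistent symbol name are made up for this illustration.

```python
import ctypes

# Passing None asks the dynamic loader for a handle covering the libraries
# already loaded into this process, which normally includes the C library.
libc = ctypes.CDLL(None)

# Accessing the attribute resolves the exported symbol "strlen".
strlen = libc.strlen
strlen.argtypes = [ctypes.c_char_p]
strlen.restype = ctypes.c_size_t

length = strlen(b"kernel")


def has_symbol(library: ctypes.CDLL, name: str) -> bool:
    """Return True if the library exports a symbol with the given name."""
    # ctypes raises AttributeError for an unresolved symbol, so hasattr()
    # reports whether the lookup succeeded.
    return hasattr(library, name)


if __name__ == "__main__":
    print("strlen resolved, result:", length)
    print("missing symbol found:", has_symbol(libc, "soliddriver_no_such_symbol"))
```

A reference that cannot be resolved is reported as an error before any call is attempted. Loading a kernel module that references a symbol the kernel does not export fails in a comparable way.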

For both shared libraries and kernel modules, the term symbol refers to a function or variable, and the term exported symbol refers to a symbol that a shared library or the kernel provides. Just as a shared library can depend on other shared libraries (meaning it uses symbols exported by those other libraries), kernel modules can depend on other kernel modules.
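The relationship between exported symbols and module dependencies can be sketched as a toy model. In the example below, each module lists the symbols it exports and the symbols it uses. A dependency exists whenever a used symbol is exported by another module rather than by the kernel. This is not the kernel's implementation, only an illustration of the idea; the module names `hypo_core` and `hypo_net`, the symbol `hypo_register`, and both function names are hypothetical.

```python
def resolve_dependencies(
    kernel_exports: set[str],
    modules: dict[str, dict[str, set[str]]],
) -> dict[str, set[str]]:
    """Return, for each module, the set of modules whose symbols it uses."""
    # Record which module provides each exported symbol.
    provider = {}
    for name, info in modules.items():
        for symbol in info["exports"]:
            provider[symbol] = name

    deps = {}
    for name, info in modules.items():
        deps[name] = set()
        for symbol in info["imports"]:
            if symbol in kernel_exports:
                continue  # resolved by the kernel itself
            if symbol not in provider:
                raise LookupError(f"{name}: unknown symbol {symbol}")
            if provider[symbol] != name:
                deps[name].add(provider[symbol])
    return deps


def load_order(deps: dict[str, set[str]]) -> list[str]:
    """Order modules so that every module comes after its dependencies."""
    order, visiting = [], set()

    def visit(name: str) -> None:
        if name in order:
            return
        if name in visiting:
            raise ValueError(f"dependency cycle involving {name}")
        visiting.add(name)
        for dep in sorted(deps[name]):
            visit(dep)
        visiting.discard(name)
        order.append(name)

    for name in sorted(deps):
        visit(name)
    return order


if __name__ == "__main__":
    kernel = {"printk", "kmalloc"}
    mods = {
        "hypo_net": {"exports": set(), "imports": {"hypo_register", "kmalloc"}},
        "hypo_core": {"exports": {"hypo_register"}, "imports": {"printk"}},
    }
    print(load_order(resolve_dependencies(kernel, mods)))
```

Here `hypo_net` uses a symbol exported by `hypo_core`, so `hypo_core` has to be loaded first. Tools such as `depmod` and `modprobe` handle this kind of ordering on a real system.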

When discussing programming interfaces, the term Application Programming Interface (API) is often used to refer to an interface at source code level, and the term Application Binary Interface (ABI) is used to refer to the binary interface of an application. These two terms are also used in conjunction with kernel modules; there, the abbreviations kAPI and kABI (k standing for kernel) are also encountered.

Kernel Module Compatibility

When loading kernel modules, it is essential to ensure that the kernel and the modules are compatible. One way to ensure this is to build the kernel and all modules in the same build, which guarantees that all data structures involved are identical. When kernel modules are built separately from the core kernel, another mechanism is needed to ensure that all the data structures involved are the same.

Historically, the kernel and its kernel modules were all built at once. Back then, it was sufficient to ensure that the version strings of the kernel and of all modules were the same; the version string only needed to change whenever an exported symbol became incompatible. When people started building their own kernel modules separately from the rest of the kernel, this weak form of compatibility checking often resulted in breakage. Incompatible modules would still load, but then crash the kernel upon first use, corrupt other parts of the kernel and cause crashes there, or, worse, cause data corruption. Kernel modules built separately from the rest of the kernel could not be handled in a reasonable way with kernel version string comparisons.

To solve this problem, a mechanism called modversions was invented, which assigns a checksum to each exported symbol. The checksums are computed based on how a symbol is defined in the source code; they include the definitions of all data types reachable from each symbol. Instead of making sure that the kernel and module have the same version, the kernel makes sure that the checksums of all the symbols that the module uses match. Modules with checksum mismatches are rejected.

This ensures that a mismatch is detected whenever a symbol, or anything reachable from that symbol, changes. However, it can also reject modules unnecessarily (false positives), because a checksum might change even when a module is not actually affected by the change that altered the checksum.
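The checksum comparison, including the false-positive case, can be illustrated with a toy model. The sketch below computes a CRC32 over a symbol's prototype together with the definitions of all types reachable from it, and accepts a module only if every checksum it recorded at build time matches the running kernel. This is a simplification for illustration, not the kernel's actual tooling, which works on the real C source. All names (`hypo_dev_id`, `struct hypo_dev`, `struct hypo_stats`, and the helper functions) are hypothetical.

```python
import zlib

# Each symbol maps to (prototype, types used directly).
# Each type maps to (definition, types it refers to).


def reachable_types(start: list[str], types: dict) -> list[str]:
    """Collect every type reachable from the starting types."""
    seen, stack = set(), list(start)
    while stack:
        name = stack.pop()
        if name in seen:
            continue
        seen.add(name)
        stack.extend(types[name][1])
    return sorted(seen)


def symbol_checksum(symbol: str, symbols: dict, types: dict) -> int:
    """CRC32 over the prototype plus all reachable type definitions."""
    prototype, used = symbols[symbol]
    parts = [prototype] + [types[t][0] for t in reachable_types(used, types)]
    return zlib.crc32("\n".join(parts).encode())


def kernel_checksums(symbols: dict, types: dict) -> dict[str, int]:
    return {s: symbol_checksum(s, symbols, types) for s in symbols}


def can_load(module_crcs: dict[str, int], kernel_crcs: dict[str, int]) -> bool:
    """Accept the module only if every recorded checksum matches the kernel."""
    return all(kernel_crcs.get(s) == crc for s, crc in module_crcs.items())


symbols = {
    "hypo_dev_id": ("int hypo_dev_id(struct hypo_dev *dev)", ["struct hypo_dev"]),
}
types_v1 = {
    "struct hypo_dev": ("struct hypo_dev { int id; struct hypo_stats *stats; }",
                        ["struct hypo_stats"]),
    "struct hypo_stats": ("struct hypo_stats { long rx; }", []),
}
# A later kernel adds a field to a type that is only reachable indirectly.
types_v2 = dict(types_v1)
types_v2["struct hypo_stats"] = ("struct hypo_stats { long rx; long tx; }", [])

# Checksums the module recorded when it was built against the first kernel.
built_against_v1 = kernel_checksums(symbols, types_v1)

if __name__ == "__main__":
    print("same kernel:", can_load(built_against_v1, kernel_checksums(symbols, types_v1)))
    print("changed kernel:", can_load(built_against_v1, kernel_checksums(symbols, types_v2)))
```

In this model, a module that only ever reads `dev->id` is still rejected by the changed kernel, because `struct hypo_stats` is reachable from the symbol it uses. That is the kind of false positive described above: the check is conservative by design.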